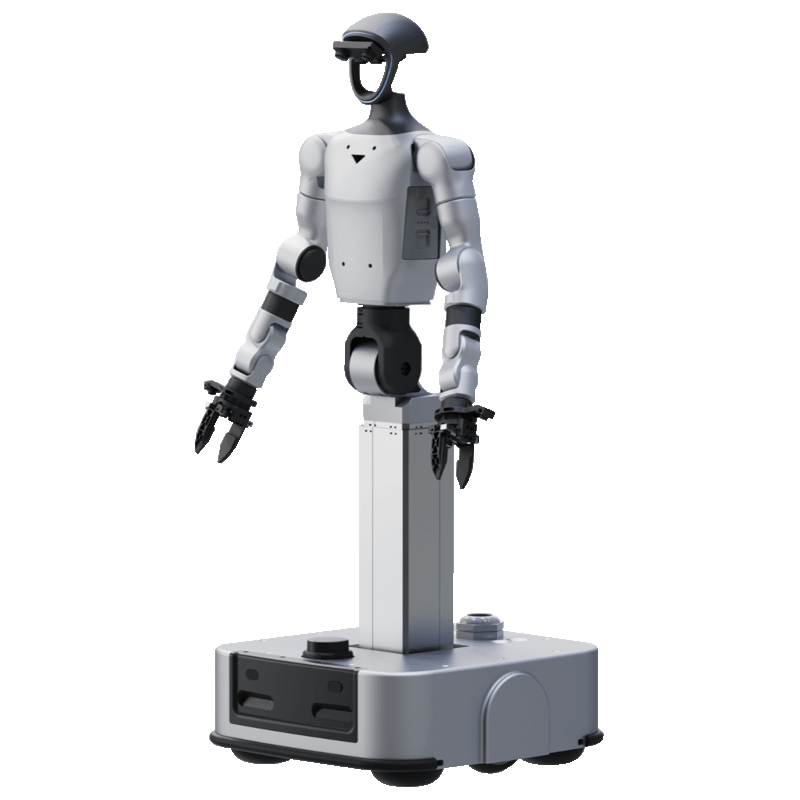

Unitree G1-D

End-to-End Platform for Humanoid Robot

Data & Training

Core Components

- High-Performance Humanoid Robot: Multiple proprietary humanoid embodiments, featuring in-house actuators, gearboxes, encoders, and sensors.

- Streamlined Data Acquisition Tools: A unified platform for the complete data pipeline: acquisition, processing, labeling, review, and data asset management.

- Comprehensive Model Training & Inference Tools: Enables distributed training, custom model development, and seamless deployment, with full support for all major open-source frameworks.

Higher-DOF Robot Platform

Total Degrees of Freedom

- Robot DOF (excl. End Effector): 19

- Arm Degrees of Freedom: 7 × 2

- Waist Degrees of Freedom: 2

- Column Degrees of Freedom: 1

- Base Degrees of Freedom: 2
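The per-subsystem figures can be cross-checked against the quoted total with a few lines of arithmetic. The sketch below sums the listed values; the assumption that the Standard model's 17 DOF (as listed under Product Parameters) corresponds to the same body without the 2 base DOF is an inference from the numbers, not a statement from the manufacturer. The function and dictionary names are illustrative only.

```python
# Per-subsystem DOF counts as listed above (end effectors excluded).
DOF = {"arm": 7, "waist": 2, "column": 1, "base": 2}
ARM_COUNT = 2


def total_dof(include_base: bool) -> int:
    """Sum the DOF of both arms, the waist, the column, and optionally the base."""
    total = DOF["arm"] * ARM_COUNT + DOF["waist"] + DOF["column"]
    if include_base:
        total += DOF["base"]
    return total


# Assumption: the wheeled configuration counts the base, the stationary one does not.
with_base = total_dof(include_base=True)      # 14 + 2 + 1 + 2
without_base = total_dof(include_base=False)  # 14 + 2 + 1
print(with_base, without_base)
```

Under that assumption the two sums match the 19 and 17 DOF figures quoted for the Flagship and Standard models.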

Expanded Operational Workspace

- Mobile Operation: A mobile lifting design that combines wheels with a lifting mechanism

- Vertical Workspace: 0-2 m

- Waist ROM (Z): ±155°

- Waist ROM (Y): -2.5° to +135°

Lower-Latency Control Response

- Lifting Accuracy: ±0.5 mm

- End-Effector Gripper Accuracy: ±0.1 mm (Note: accuracy varies with different end-effector configurations.)

- System Teleoperation Latency: < 100 ms

- Sampling Rate: 60 Hz
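To put the latency and sampling figures on a common scale, the short sketch below converts the 60 Hz sampling rate into a sample period and expresses the 100 ms latency bound in sample periods. It only relates the two quoted numbers to each other; how latency is actually measured on the system is not stated in the source, and the function names are illustrative.

```python
SAMPLING_RATE_HZ = 60    # sampling rate quoted above
LATENCY_BOUND_MS = 100   # teleoperation latency is quoted as below this bound


def sample_period_ms(rate_hz: float) -> float:
    """Time between two consecutive samples, in milliseconds."""
    return 1000.0 / rate_hz


def bound_in_sample_periods(bound_ms: float, rate_hz: float) -> float:
    """How many sample periods fit into the latency bound."""
    return bound_ms / sample_period_ms(rate_hz)


period = sample_period_ms(SAMPLING_RATE_HZ)
periods = bound_in_sample_periods(LATENCY_BOUND_MS, SAMPLING_RATE_HZ)
print(f"sample period: {period:.2f} ms, latency bound: {periods:.1f} periods")
```

In other words, one sample arrives roughly every 16.7 ms, and the quoted latency bound corresponds to about six sample periods.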

Streamlined Data Acquisition Tools

Streamline data collection and reduce costs with flexible, standardized workflows that move beyond traditional manual processes.

- Visual Template Management for Efficient Collection: Integrates project management, task allocation, progress tracking, and status analysis into a unified platform. With templated configurations, data collection tasks can be generated with one click. Real-time tracking of the entire workflow ensures smoother collaboration and more efficient data acquisition.

- Flexible Configurations with Diverse Platforms & End Effectors: The system supports data collection across various robot platforms and end-effector configurations. Its robust data standardization capability enables end-to-end processing from diverse devices to high-quality model training data.

- High-Concurrency Architecture for Scalable Operations: Supports hundreds of robots collecting data simultaneously. Through high-concurrency architecture and load-balanced scheduling, the platform ensures real-time reception and processing of massive data streams.

- 24/7 Online Collection, Stable and Reliable: Built on a highly available service architecture, the platform enables reliable 24/7 data collection. With extensive format compatibility, collected data can be directly output or converted into mainstream training formats, accelerating R&D efficiency.
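The source does not describe how the load-balanced scheduling works. As a generic illustration of the idea only, the sketch below assigns each incoming robot data stream to the currently least-loaded ingest worker using a min-heap. The worker count, the robot identifiers, and the function `assign_streams` are all hypothetical and are not part of the platform's API.

```python
import heapq


def assign_streams(robot_ids, worker_count):
    """Assign each robot stream to the worker with the fewest streams so far."""
    # Heap entries are (current_load, worker_index); the smallest load pops first.
    heap = [(0, worker) for worker in range(worker_count)]
    heapq.heapify(heap)
    assignment = {}
    for robot_id in robot_ids:
        load, worker = heapq.heappop(heap)
        assignment[robot_id] = worker
        heapq.heappush(heap, (load + 1, worker))
    return assignment


# Hypothetical fleet of 200 robots spread over 8 ingest workers.
robots = [f"robot-{i:03d}" for i in range(200)]
assignment = assign_streams(robots, worker_count=8)
loads = [list(assignment.values()).count(w) for w in range(8)]
print(loads)
```

With equal-cost streams this reduces to an even split. A real scheduler would more likely weight each stream by its bandwidth or processing cost.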

Comprehensive Model Training & Inference Tools

The platform supports an end-to-end workflow from data processing to one-click model deployment, and it integrates a variety of mainstream open-source robotic model frameworks.

- Rich Ecosystem with Mainstream Model Support: An open model ecosystem with built-in community datasets and support for training on open-source datasets. It offers deep integration with leading open-source models such as PI and GROOT.

- Simulation Environment for Model Evaluation: Includes a high-fidelity, high-precision 3D asset library. By constructing realistic simulation environments, it rapidly generates comprehensive evaluation schemes to support algorithm validation.

- User-Friendly Design for Rapid Deployment: Fully deployable out-of-the-box, minimizing setup time. Launch model development instantly via "one-click training," leverage integrated simulation tools for reliable evaluation, and achieve seamless migration from algorithms to real-world machines.

- Distributed Training for High Efficiency: Built on a high-performance distributed training architecture, the platform enables elastic scheduling of computing tasks and parallel acceleration. It scales dynamically with workload, achieving up to 90% GPU utilization.

UnifoLM-WMA-0:

A World-Model-Action (WMA) Framework

Decision-Making Mode:

Action Generation Powered by Precise Prediction

By analyzing the current environmental state and task objectives, the system accurately predicts future physical interactions between the robot and its surroundings. These predictive insights directly assist the policy module in generating actions, effectively reducing decision errors while optimizing the accuracy and rationality of motion execution.
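The general pattern described here, predicting the outcome of candidate actions and letting that prediction guide action selection, can be illustrated with a deliberately tiny example. This is not the UnifoLM-WMA-0 architecture: the one-dimensional state, the hand-written `toy_world_model`, and the greedy `choose_action` rule are hypothetical stand-ins for a learned world model and policy module.

```python
from typing import Callable, Sequence

State = float   # toy 1-D state, e.g. a gripper position along one axis
Action = float  # toy 1-D action, e.g. a commanded displacement


def toy_world_model(state: State, action: Action) -> State:
    """Hypothetical predictor: only 80% of the commanded motion is realised."""
    return state + 0.8 * action


def choose_action(
    state: State,
    goal: State,
    candidates: Sequence[Action],
    predict: Callable[[State, Action], State],
) -> Action:
    """Pick the candidate whose predicted next state lies closest to the goal."""
    return min(candidates, key=lambda a: abs(predict(state, a) - goal))


state, goal = 0.0, 1.0
candidates = [-0.25, 0.0, 0.25, 0.5, 1.0]
for step in range(5):
    action = choose_action(state, goal, candidates, toy_world_model)
    # For simplicity the toy model also plays the role of the real environment.
    state = toy_world_model(state, action)
    print(step, action, state)
```

Because the selection rule consults the predictor, it compensates for the 80% motion loss instead of assuming commands are executed perfectly, which is the sense in which prediction can reduce decision errors.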

Simulation Mode:

High-Fidelity Feedback for Data Generation

Operates as an interactive simulator capable of generating high-fidelity environmental feedback based on robot motion inputs. By producing high-quality synthetic data, it provides a rich data source for model training and policy optimization, significantly accelerating the learning process.

Product Parameters

| Model | G1-D (Standard) | G1-D (Flagship) |
| --- | --- | --- |
| Overall Dimensions (Min. Column Height) | 1260 × 500 × 500 mm | 1260 × 525 × 570 mm |
| Overall Dimensions (Max. Column Height) | 1680 × 500 × 500 mm | 1680 × 525 × 570 mm |
| Total Weight (incl. battery) | Approx. 50 kg | Approx. 80 kg |
| Total DOF (excl. End Effector) | 17 | 19 |
| Single Arm DOF (excl. End Effector) | 7 | 7 |
| Max. Single Arm Payload [1] | Approx. 3 kg | Approx. 3 kg |
| End Effector Options [2] | Optional 2-Finger Gripper / 3-Finger Dexterous Hand (No Tactile) / 3-Finger Dexterous Hand (With Tactile) / 5-Finger Dexterous Hand | Optional 2-Finger Gripper / 3-Finger Dexterous Hand (No Tactile) / 3-Finger Dexterous Hand (With Tactile) / 5-Finger Dexterous Hand |
| Waist DOF | 2 | 2 |
| Waist Joint Range of Motion | Z-axis: ±155°, Y-axis: -2.5° to +135° | Z-axis: ±155°, Y-axis: -2.5° to +135° |
| Column Lifting Speed | Approx. 60 mm/s | Approx. 60 mm/s |
| Maximum Mobility Speed | / | 1.5 m/s |
| Chassis Drive Type | / | Differential drive, supports 360° in-place rotation |
| Chassis Sensors | / | LiDAR ×1 + Depth Camera ×2 + Physical Collision Sensor ×2 + Low-Obstacle Detection Sensor ×2 |
| Basic Computing Power | 8-core High-performance CPU | 8-core High-performance CPU |
| Perception Sensors | Head HD Binocular Camera ×1 + Wrist HD Camera ×2 | Head HD Binocular Camera ×1 + Wrist HD Camera ×2 |
| Wi-Fi 6, Bluetooth 5.2 | Yes | Yes |
| High Computing Power Module | NVIDIA Jetson Orin NX 16GB (100 TOPS) | NVIDIA Jetson Orin NX 16GB (100 TOPS) |
| Battery | Upper Body Battery (Quick-release): 9 Ah | Chassis Battery (Built-in): 30 Ah |
| Manual Controller | Yes | Yes |
| Visualization Computer | Yes | Yes |
| Battery Life | Approx. 2 hours | Approx. 6 hours |
| Upgraded Intelligent OTA | Yes | Yes |
| Secondary Development [3] | Yes | Yes |


[1] The maximum arm payload varies greatly with the arm's extension posture.

[2] For end-effector selection, please contact our sales team.

[3] For more information, please read the secondary development manual.

[4] For more detailed warranty terms, please read the product warranty brochure.

[5] The above parameters may vary across scenarios and configurations; the actual product prevails.

[6] The humanoid robot has a complex structure and very powerful actuation. Users should keep a sufficient safe distance between the robot and people. Please use with caution.

[7] The product appearance is subject to change. Please refer to the final product.

[8] Some sample functions on this page are still being developed and tested, and will be made available to users in the future.

[9] This product is a civilian robot. We kindly request that all users refrain from making any dangerous modifications or using the robot in a hazardous manner.

[10] Please visit Unitree Robotics Website for more related terms and policies, and comply with local laws and regulations.
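As a worked example of reading the parameters together, the sketch below estimates the column stroke and the time for a full lift from the listed dimensions and lifting speed. It assumes that the difference between the overall heights at maximum and minimum column height equals the column travel, and that the column moves at the quoted approximate speed throughout, ignoring acceleration. Neither assumption is stated in the source.

```python
MIN_HEIGHT_MM = 1260     # overall height at minimum column height
MAX_HEIGHT_MM = 1680     # overall height at maximum column height
LIFT_SPEED_MM_S = 60     # approximate column lifting speed


def column_travel_mm(min_height_mm: float, max_height_mm: float) -> float:
    """Column stroke, assumed equal to the change in overall height."""
    return max_height_mm - min_height_mm


def full_travel_time_s(travel_mm: float, speed_mm_s: float) -> float:
    """Time for one full stroke at constant speed (acceleration ignored)."""
    return travel_mm / speed_mm_s


travel = column_travel_mm(MIN_HEIGHT_MM, MAX_HEIGHT_MM)
duration = full_travel_time_s(travel, LIFT_SPEED_MM_S)
print(f"travel: {travel} mm, full lift: about {duration:.0f} s")
```

Under these assumptions the column travels 420 mm, and a full lift takes about 7 seconds.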

Options:

- G1D U1 (Standard A) - stationary

- G1D U2 (Standard B) - stationary

- G1D U3 (Standard C) - stationary

- G1D U4 (Standard D) - stationary

- G1D U5 (Standard E) - stationary

- G1D U6 (Flagship A) - wheeled

- G1D U7 (Flagship B) - wheeled

- G1D U8 (Flagship C) - wheeled

- G1D U9 (Flagship D) - wheeled

- G1D U10 (Flagship E) - wheeled